🚨 BREAKING | ORIGINAL INVESTIGATION | HEALTH & MEDICINE

March 9, 2026

ABSTRACT: Medical diagnosis is often portrayed as a rigorous exercise of scientific reasoning. Yet decades of peer-reviewed research reveal a more unsettling reality: physician decision-making is systematically contaminated by cognitive biases rooted in subjective experience, ego, ideology, and emotion — not evidence. The overall rate of incorrect diagnoses in healthcare has been estimated at 10–15%, with cognitive factors implicated in up to 96% of emergency medicine errors. This investigation synthesizes landmark psychology and medical literature on cognitive bias, connects these findings to the universal human tendency to interpret reality through the distorting lens of personal experience, and presents a detailed case study on how political ideology drove measurably divergent COVID-19 vaccine recommendations among U.S. physicians. Finally, it argues that artificial intelligence — operating without ego, without fear, without political affiliation — represents a structurally superior decision-making architecture for evidence-based medicine, while candidly acknowledging AI's own emerging limitations.

Section I: The Nature of Human Judgment — A Dual-Process Architecture

The human brain did not evolve to evaluate clinical evidence. It evolved to survive predators, navigate social hierarchies, and recognize patterns quickly enough to stay alive. The cognitive machinery that kept our ancestors from being eaten by lions is the same machinery physicians deploy when deciding whether a patient's chest pain is anxiety or a pulmonary embolism.

1.1 Dual-Process Theory: System 1 and System 2

The foundational framework for understanding human cognitive error in medicine derives from dual-process theory, most prominently articulated by Nobel laureate Daniel Kahneman in his landmark 2011 work, Thinking, Fast and Slow. Kahneman describes two cognitive systems that operate in parallel:

| SYSTEM 1 (Intuitive / Fast) | SYSTEM 2 (Analytical / Slow) |
|---|---|
| Automatic, subconscious | Deliberate, conscious |
| Relies on heuristics (mental shortcuts) | Relies on logic and evidence evaluation |
| Rapid (milliseconds to seconds) | Slow (minutes to hours) |
| Highly susceptible to cognitive bias | Resistant to bias when fully engaged |
| Dominates 95%+ of daily decisions | Engaged only under deliberate effort |
| Primary driver of clinical heuristics | Engaged when physicians 'take a time-out' |

"System 1 can be influenced by multiple factors, many of them subconscious — emotional polarization toward the patient, recent experience with the diagnosis being considered, specific cognitive or affective biases. System 2 overrides System 1 only when physicians take a time-out to reflect on their thinking." — Ely et al., Dual Process Theory Model, BMC Medical Informatics & Decision Making, 2016

In clinical settings, System 1 dominates. An experienced physician walks into a room, takes in a patient's appearance, posture, speech, and reported symptoms, and within seconds has formed an initial diagnostic hypothesis. This intuitive leap is efficient — and it is also deeply fallible.

1.2 The Lens of Self: Egocentric Bias and the Constructed Reality

Philosophers from Plato to Kant have grappled with a central epistemological problem: we do not perceive reality directly. We perceive a construction of reality assembled by our brains from incomplete sensory data, filtered through prior experience, expectation, and emotion. In clinical medicine, this has a direct operational consequence: the physician who walks into an exam room does not see the patient in front of them — they see a projection of every patient they have ever treated, every case they have ever read, every belief they have ever formed.

This is the phenomenon psychologists call egocentric bias — the systematic tendency to interpret the world through the narrow aperture of one's own experience. Social psychologist Lee Ross coined the related term "fundamental attribution error" in 1977 to describe the universal tendency to overweight personal dispositional factors over situational factors when evaluating other people. In medicine, this manifests as the physician who looks at an overweight patient with back pain and concludes "deconditioning" rather than ordering an MRI.

"Physicians who see a patient through the lens of their own experience, limitations, and pre-existing mental models may unconsciously favor interpretations that match their internal constructs rather than adapting to new data." — O'Sullivan, E.D. & Schofield, S.J. (2018). Cognitive bias in clinical medicine. Journal of the Royal College of Physicians of Edinburgh, 48(3), 225–232

Section II: A Taxonomy of Clinical Cognitive Biases

A 2018 review by O'Sullivan and Schofield catalogued more than 100 distinct cognitive biases documented in healthcare settings. The following are most directly linked to the "ego-driven assumption" phenomenon — the tendency to see not what is there, but what one expects to see.

Cognitive Bias | Description | Prevalence |

|---|---|---|

Overconfidence Bias | Inflated belief in diagnostic accuracy | 60% |

Anchoring Bias | Over-reliance on initial impression | 52% |

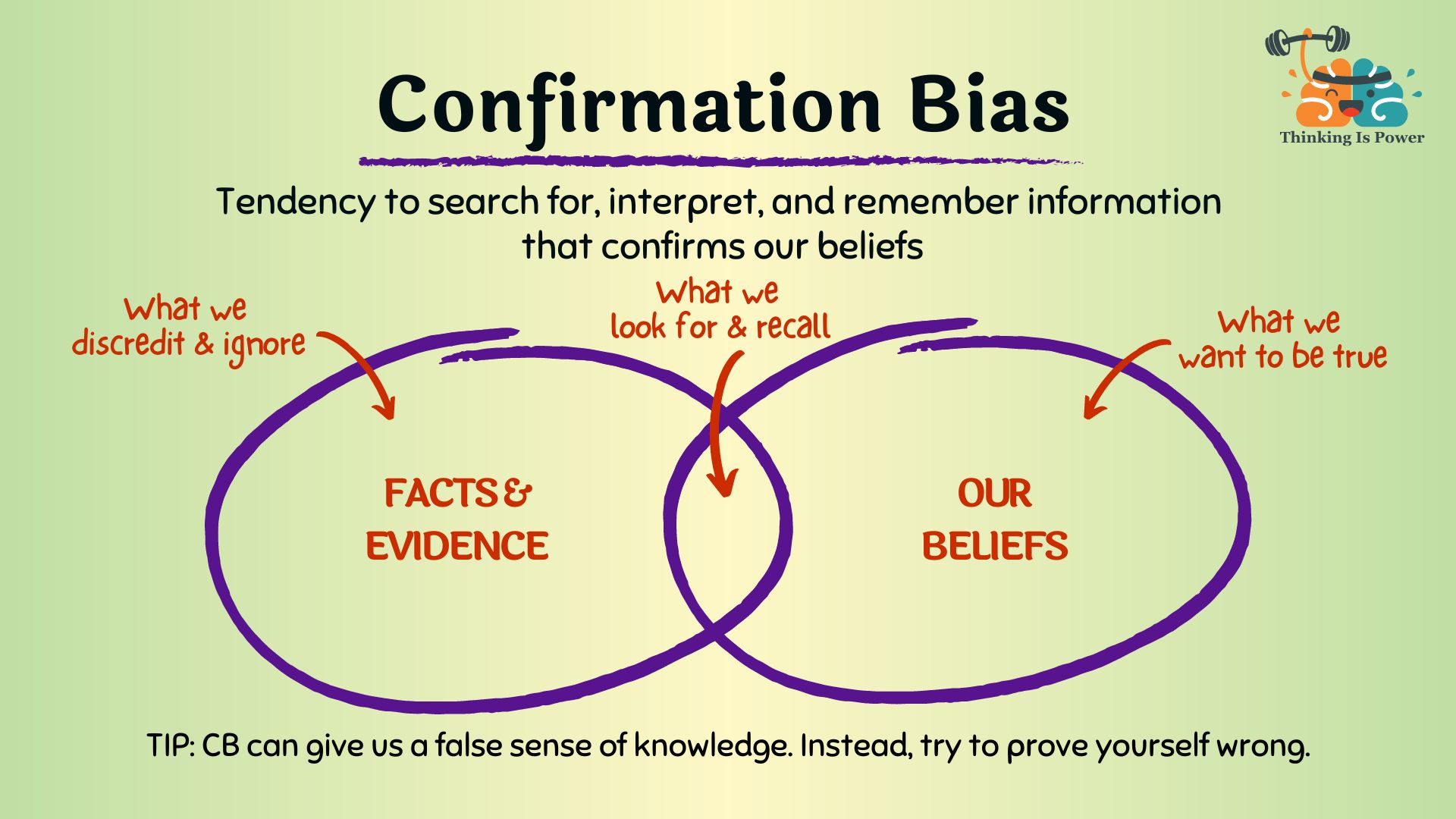

Confirmation Bias | Seeking confirming evidence only | 48% |

Availability Bias | Overweighting recent diagnoses | 42% |

Ascertainment Bias | Stereotyping on demographics/history | 38% |

Premature Closure | Stopping diagnostic search too early | 35% |

Commission Bias | Tendency to act rather than observe | 28% |

Source: Graber et al. (2005); O'Sullivan & Schofield (2018); Saposnik et al. (2016), BMC Medical Informatics & Decision Making.

2.1 Confirmation Bias

Confirmation bias is the selective gathering and interpretation of evidence consistent with current beliefs while ignoring contradictory data. In medicine, it manifests when a physician refuses to abandon an initial diagnosis despite accumulating counter-evidence. As the AMA Journal of Ethics documented in 2020, this bias can produce "diagnostic momentum" — a misdiagnosis formed early in the clinical pathway that gets passed from physician to physician without re-examination.

"Confirmation bias leads physicians to see what they want to see. Since it occurs early in the treatment pathway, it can cause mistaken diagnoses to be passed on to and accepted by other clinicians without their validity being questioned — a process referred to as diagnostic momentum." — AMA Journal of Ethics, September 2020 — 'Believing in Overcoming Cognitive Biases'

2.2 Anchoring Bias

Anchoring bias occurs when a physician fixates on an initial data point (the first lab value, the referral note, the triage nurse's assessment) and fails to adjust adequately when subsequent evidence contradicts it. The systematic review by Saposnik et al. (2016) found anchoring effects associated with diagnostic errors in 51% of evaluated case scenarios, compared with 16.4% for errors not attributable to cognitive biases (p = 0.029).

2.3 Availability Bias

Availability bias distorts diagnostic reasoning by causing physicians to overweight diagnoses they have recently encountered or read about. A physician who recently treated three consecutive patients with pulmonary embolism will order CT pulmonary angiograms at a higher rate in the weeks that follow — not because the base rate of PE has changed, but because their cognitive architecture has made that diagnosis more "available."
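The base-rate point can be made concrete with a small worked example. The sketch below applies Bayes' rule to a single positive finding under two priors: one set to the actual base rate and one inflated by a run of recent cases. Every number here (the two priors, the sensitivity, the specificity) is a hypothetical value chosen for illustration, not a figure from any study cited in this article.

```python
def posterior_probability(prior: float, sensitivity: float, specificity: float) -> float:
    """Bayes' rule: probability of disease given one positive finding."""
    true_positive = sensitivity * prior
    false_positive = (1.0 - specificity) * (1.0 - prior)
    return true_positive / (true_positive + false_positive)


# Hypothetical values, for illustration only.
TRUE_BASE_RATE = 0.02   # assumed actual prevalence among comparable patients
INFLATED_PRIOR = 0.20   # assumed "felt" prevalence after several recent cases
SENSITIVITY = 0.90      # assumed sensitivity of the finding
SPECIFICITY = 0.80      # assumed specificity of the finding

calibrated = posterior_probability(TRUE_BASE_RATE, SENSITIVITY, SPECIFICITY)
biased = posterior_probability(INFLATED_PRIOR, SENSITIVITY, SPECIFICITY)

print(f"posterior with true base rate: {calibrated:.3f}")
print(f"posterior with inflated prior: {biased:.3f}")
```

The evidence is identical in both calls; only the prior differs. Under these assumed numbers, the inflated prior pushes the same finding from roughly an 8% to roughly a 53% estimated probability, which is the distortion the paragraph above describes.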

2.4 Overconfidence Bias

Perhaps the most dangerous of all clinical biases, overconfidence reflects an inflated belief in one's own diagnostic accuracy. Saposnik et al. (2016) found overconfidence present in 50–70% of study participants. A 2022 Japanese study of 387 emergency physicians identified overconfidence as the most common cognitive error category, present in 22.5% of memorable diagnostic errors.

2.5 Ascertainment Bias and Implicit Stereotyping

Ascertainment bias involves clinical thinking shaped by prior expectations about patient demographics: their gender, race, socioeconomic status, or behavioral history. It is the mechanism by which a disheveled patient's altered mental status gets attributed to drug intoxication rather than evaluated for diabetic ketoacidosis, stroke, or subdural hematoma.

Section III: The Psychology Behind the Lens — Landmark Studies

The tendency to see the world through the distorting lens of one's own experience is not a medical phenomenon — it is a fundamental property of human cognition. The following landmark studies from experimental psychology establish the scientific foundation.

3.1 The Asch Conformity Experiments (1951–1956)

Solomon Asch's foundational conformity experiments at Swarthmore College demonstrated that 75% of participants would give a demonstrably incorrect answer at least once when surrounded by confederates providing the wrong answer. In clinical medicine, the "confederates" are the admitting diagnosis, the referral note, and the institutional culture.

3.2 The Milgram Obedience Studies (1963)

Stanley Milgram's obedience experiments at Yale revealed that 65% of participants would administer what they believed were dangerous electric shocks to a stranger when instructed by an authority figure. In medicine, the authority figure is institutional hierarchy, pharmaceutical company influence, or the social consensus of one's medical community.

3.3 The Dunning-Kruger Effect (1999)

In their landmark 1999 study, David Dunning and Justin Kruger demonstrated that incompetent individuals systematically overestimate their own competence — and that this metacognitive failure is precisely what prevents them from recognizing their incompetence. In clinical medicine, this produces a paradox: the physician most likely to miss a diagnosis is also the physician least likely to recognize they might be missing it.

"Unskilled and unaware of it: how difficulties in recognizing one's own incompetence lead to inflated self-assessments." — Kruger, J. & Dunning, D. (1999). Journal of Personality and Social Psychology, 77(6), 1121–1134

3.4 Kahneman & Tversky: Prospect Theory and Framing Effects (1979)

Kahneman and Tversky's Nobel Prize-winning work on Prospect Theory demonstrated that human judgment is not calibrated to objective probability; it is calibrated to perceived gains and losses relative to a subjective reference point. The same treatment recommendation can be accepted or rejected by the same physician depending on whether it is framed in terms of survival rates or mortality rates.

3.5 The Implicit Association Test and Unconscious Racial Bias in Medicine

Green et al.'s landmark 2007 study using the Implicit Association Test on 287 medical residents found that the majority held implicit preferences for White patients over Black patients, and that these preferences correlated with divergent thrombolysis recommendations for identical presenting symptoms. The Institute of Medicine's 2003 report, Unequal Treatment, synthesized over 100 studies documenting systematic disparities in pain assessment, cardiac catheterization referral, and analgesic prescribing attributable to physician implicit bias.

Section IV: Quantifying the Damage — The Scale of Diagnostic Error

Cognitive bias is not an abstract philosophical concern. It kills people. The following data from peer-reviewed sources quantify the clinical and economic consequences of bias-driven diagnostic error.

| Metric | Finding |
|---|---|
| Overall misdiagnosis rate (clinical settings) | 10–15% of all diagnoses |
| Cognitive factors in ER misdiagnoses | Up to 96% of cases |
| Outpatient misdiagnosis rate | ~5% of consultations |
| Cognitive bias in diagnostic inaccuracies | 36.5–77% of case scenarios |
| Overconfidence prevalence in physicians | 50–70% of study participants |
| Bias-linked errors vs. non-bias errors | 51% vs. 16.4% (p=0.029) |
| Annual U.S. deaths from diagnostic error | ~40,000–80,000 (est.) |
| Annual U.S. cost of diagnostic error | >$100 billion (est.) |

Sources: PMC 8520040 (O'Sullivan & Schofield 2018); PMC 5093937 (Saposnik et al. 2016); Institute of Medicine, To Err is Human (2000); Singh et al. (2013), JAMA Internal Medicine; National Academies of Sciences (2015).

Section V: Case Study — The COVID-19 Vaccine and Ideologically Driven Clinical Decisions

Perhaps no event in modern medical history so vividly exposed the contamination of clinical reasoning by cognitive bias as the COVID-19 pandemic. What should have been a straightforward exercise in evidence-based medicine became instead a politically polarized battlefield in which physicians reviewing the same evidence reached diametrically opposite clinical conclusions depending on their political ideology, media consumption habits, and pre-existing worldview.

5.1 The PNAS Study: Political Ideology Colors Scientific Evaluation

The most rigorous documentation of this phenomenon was published in the Proceedings of the National Academy of Sciences (PNAS) in February 2023 by researchers from the University of Pittsburgh and Harvard Kennedy School. The study surveyed 592 critical care physicians and 900 laypeople between April 2020 and April 2022.

"Political ideology colors the evaluation of scientific evidence to a greater degree when it pertains to a politicized treatment." — Minson et al. (2023), PNAS 120(7) — 'The Political Polarization of COVID-19 Treatments Among Physicians and Laypeople in the United States'

The findings were stark. Conservative physicians were approximately five times more likely than their liberal and moderate colleagues to say they would treat a hypothetical COVID-19 patient with hydroxychloroquine. This polarization was driven by partisan cable news consumption — not by exposure to scientific research.

5.2 Ideological Divergence in Vaccine Recommendations

| Physician Political Orientation | COVID Vaccine Enthusiasm | Hydroxychloroquine Recommendation Rate |
|---|---|---|
| Liberal / Progressive | High | ~1x baseline |
| Moderate / Centrist | Moderate-High | ~2x baseline |
| Conservative / Right-Leaning | Moderate-Low | ~5x baseline |

Source: Minson et al. (2023), PNAS 120(7); Harvard Kennedy School Faculty Research Summary.

5.3 The Mechanics of Political Cognitive Bias in COVID Vaccine Decisions

The mechanism by which political ideology contaminated vaccine recommendation follows a predictable cognitive pathway:

Identity-protective cognition: Kahan (2017) documented that individuals process scientific evidence in a biased fashion that supports their own political group identity. A physician who perceives the COVID vaccine as politically "liberal" will unconsciously apply more critical scrutiny to safety data.

Framing bias: When COVID-19 is framed as a hoax — as it was by dominant conservative media outlets — physicians exposed to that framing disproportionately discount the severity data that would otherwise drive vaccine urgency.

Social proof and network effects: Unvaccinated Republican social contacts had a particularly strong dampening effect on individual vaccine confidence. Physicians are not immune to the social proof dynamics of their professional and personal networks.

Authority bias inverted: Political polarization caused many conservative physicians to treat government health agency recommendations not as evidence but as politically motivated directives to be resisted.

Availability bias amplified: Rare adverse events following COVID vaccination received outsized coverage in conservative outlets, dramatically increasing their cognitive availability and distorting individual physician risk calculations.

5.4 The Measurable Public Health Consequence

This ideologically driven clinical divergence was not merely an abstract concern; it was associated with measurable mortality. Wallace, Goldsmith-Pinkham & Schwartz (2022) found that, once vaccines were widely available, excess death rates among registered Republican voters were significantly higher than among registered Democrats, a gap associated with Republicans' lower vaccination uptake that accelerated through the Delta and Omicron waves.

Section VI: The Structural Advantages of AI in Clinical Decision-Making

If human cognitive bias is the disease, what is the cure? The emerging literature on artificial intelligence in clinical decision-making offers a compelling — if nuanced — answer. AI systems do not experience fear, ambition, political affiliation, or ego. They do not have a bad night's sleep before a difficult shift. They do not anchor on the first diagnosis they read in the chart.

6.1 Empirical Evidence: AI Diagnostic Performance

| Study / Source | Finding | Clinical Domain |
|---|---|---|
| Hoppe et al. (2024), JMIR | GPT-4 outperformed ED resident physicians in diagnostic accuracy | Emergency Medicine |
| MDPI Bioengineering (2025) | AI scored 72–96% vs. physicians' 46–62% on medical exams (p<0.001) | General Medicine |
| Takita et al. (2025), npj Digital Medicine | AI matched non-expert physician performance across 83 studies | Multi-domain |
| PMC Meta-Analysis (2025), Dermatology | AI non-inferior or superior to dermatologists in 30 of 38 studies | Dermatology |
| JMIR Medical Informatics (2025) | LLMs analyzed 4,762 cases across 19 models vs. 193 physicians | Multi-specialty |

6.2 Why AI Is Structurally Less Biased Than Human Clinicians

No ego: AI does not have a professional reputation to protect or a prior diagnosis to defend. It can update its probability estimates continuously as new evidence arrives without psychological discomfort.

No emotional polarization: AI does not develop counter-transference toward difficult patients and does not attribute suffering differently based on whether a patient is likeable.

No political ideology: As the PNAS COVID study demonstrated, physician political orientation predicted treatment recommendations for politicized therapies. AI has no political identity.

No availability contamination: AI pattern recognition is calibrated to population-level base rates rather than the skewed sample of cases a single physician has recently encountered.

No fatigue: Human cognitive bias increases dramatically under fatigue and time pressure — the precise conditions most common in clinical settings. AI performance is invariant to shift length or overnight call.
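The claims about continuous updating and freedom from anchoring can be illustrated with an idealized Bayesian updater. The sketch below multiplies prior odds by a likelihood ratio for each finding and shows that the final estimate does not depend on the order in which the findings arrive. This is a textbook model that assumes conditionally independent findings; the prior and the likelihood ratios are hypothetical, and the code does not describe how any deployed clinical AI system or large language model actually works.

```python
from itertools import permutations


def update_probability(prior: float, likelihood_ratios) -> float:
    """Convert the prior to odds, apply each likelihood ratio, convert back."""
    odds = prior / (1.0 - prior)
    for ratio in likelihood_ratios:
        odds *= ratio
    return odds / (1.0 + odds)


# Hypothetical values, for illustration only.
PRIOR = 0.10                 # assumed pre-test probability
FINDINGS = (4.0, 0.5, 0.25)  # assumed likelihood ratios for three findings

# Evaluate every possible arrival order of the same three findings.
posteriors = [update_probability(PRIOR, order) for order in permutations(FINDINGS)]

print(f"lowest posterior:  {min(posteriors):.4f}")
print(f"highest posterior: {max(posteriors):.4f}")
```

Because multiplication is commutative, the first finding read carries no more weight than the last, so all six orderings agree up to floating-point rounding. An anchored reasoner, by contrast, would give the first finding disproportionate influence.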

6.3 The Socratic Machine — AI as Tireless Interlocutor

There is a deeper argument for AI augmentation that goes beyond diagnostic accuracy statistics. The Socratic method — the discipline of systematically questioning assumptions, demanding evidence for claims, and refusing to accept conclusions not derived from rigorous reasoning — is precisely what human cognitive architecture resists under stress.

An AI clinical decision support system functions as a perpetual Socratic interlocutor: one that asks, without embarrassment or hierarchy, "Have you considered alternative diagnoses? What is the base rate of this condition in this demographic? Your initial hypothesis was formed before the lab results returned — does the new data support it?" This is not a replacement for the physician. It is the thing physicians need most: a tireless, ego-free colleague whose only interest is the evidence.
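As a rough illustration of the interlocutor idea, the sketch below turns the three questions quoted above into a simple rule-based checklist. The `WorkingDiagnosis` class, its fields, and the `socratic_prompts` function are hypothetical names invented for this example; a real clinical decision support system would be far more involved.

```python
from dataclasses import dataclass, field


@dataclass
class WorkingDiagnosis:
    """Hypothetical record of a clinician's current diagnostic hypothesis."""
    label: str
    differentials_considered: list[str] = field(default_factory=list)
    base_rate_checked: bool = False
    formed_before_results: bool = False


def socratic_prompts(dx: WorkingDiagnosis) -> list[str]:
    """Return the challenge questions that still apply to this hypothesis."""
    prompts = []
    if not dx.differentials_considered:
        prompts.append("Have you considered alternative diagnoses?")
    if not dx.base_rate_checked:
        prompts.append("What is the base rate of this condition in this demographic?")
    if dx.formed_before_results:
        prompts.append(
            "Your initial hypothesis was formed before the lab results returned. "
            "Does the new data support it?"
        )
    return prompts


# An unexamined hypothesis triggers all three questions.
unexamined = WorkingDiagnosis("anxiety", formed_before_results=True)
for question in socratic_prompts(unexamined):
    print(question)

# A hypothesis that has already been examined triggers none.
examined = WorkingDiagnosis("pulmonary embolism", ["anxiety"], base_rate_checked=True)
print(len(socratic_prompts(examined)))
```

The design choice worth noting is that the function only asks questions. It never proposes or overrides a diagnosis, which matches the article's framing of AI as an interlocutor, not a replacement for the physician.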

Section VII: The Limitations — AI Is Not Unbiased, It Is Differently Biased

Intellectual honesty requires acknowledging that AI is not a cognitive bias elimination machine. It is a system with different — and in some respects, equally serious — failure modes.

7.1 Training Data Bias

AI systems learn from historical medical data. If that data reflects decades of racially biased treatment patterns — which it does — the AI will learn and perpetuate those patterns at scale.

7.2 The Hallucination Problem

Current large language models are known to generate plausible-sounding but factually incorrect clinical information with a confidence that may exceed a human physician's willingness to challenge it.

7.3 The Expert Performance Gap

The meta-analysis by Takita et al. (2025) found that while AI matched non-expert physician performance, it performed significantly worse than expert physicians (p = 0.007). AI's advantage is most pronounced when compared to average or below-average clinical reasoners — not against the physician already applying rigorous System 2 thinking.

7.4 The Optimal Model: Human-AI Collaboration

| Human Clinician Strengths | AI System Strengths |
|---|---|
| Empathy and therapeutic rapport | Consistent, ego-free evidence processing |
| Physical examination capability | No fatigue or emotional contamination |
| Cultural and contextual nuance | Population-level base rate calibration |
| Procedural and surgical skill | Instant synthesis of vast medical literature |
| Ethical judgment and patient values navigation | No political or ideological bias |
| Adaptive reasoning under uncertainty | Invariant performance across time and volume |

Section VIII: Conclusion — The Examined Diagnosis

"The unexamined life is not worth living." — Socrates, as reported by Plato in the Apology (399 BC)

Socrates' insistence on rigorous self-examination — on questioning assumptions, exposing hidden premises, and submitting every belief to the scrutiny of evidence — was not merely a philosophical posture. It was a survival strategy against the most dangerous human tendency: mistaking familiarity for truth, and personal experience for universal law.

The physician who walks into an exam room carrying decades of personal experience, political convictions, media consumption habits, and unconscious demographic assumptions does not see the patient in front of them as they are. They see a projection of everything they already believe. This is not a character flaw — it is the cognitive condition of every human being, including the most brilliant physician ever trained.

The COVID-19 vaccine controversy provided the most visible and measurable demonstration of this reality in modern medical history. Identical clinical evidence, evaluated by trained physicians, produced diametrically opposite recommendations — based not on scientific reasoning but on political identity. People died as a consequence.

Artificial intelligence will not solve this problem. But it may be, for the first time in human medicine, a reliable mechanism for slowing the System 1 impulse long enough for System 2 to engage. A tireless, politically neutral, ego-free Socratic interlocutor asking, over and over, the question that saves lives: "Are you sure? What does the evidence actually say?"

"We cannot solve our problems with the same thinking we used when we created them." — attributed to Albert Einstein

References

Asch, S.E. (1951). Effects of group pressure upon the modification and distortion of judgment. In H. Guetzkow (Ed.), Groups, Leadership, and Men. Carnegie Press.

Croskerry, P. (2002). Achieving quality in clinical decision making: Cognitive strategies and detection of bias. Academic Emergency Medicine, 9(11), 1184–1204.

Kruger, J. & Dunning, D. (1999). Unskilled and unaware of it: How difficulties in recognizing one's own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134.

Gopal, D.P. et al. (2021). Implicit bias in healthcare: Clinical practice, research and decision making. Future Healthcare Journal, 8(1), 40–48.

Graber, M.L., Franklin, N. & Gordon, R. (2005). Diagnostic error in internal medicine. Archives of Internal Medicine, 165(13), 1493–1499.

Green, A.R. et al. (2007). Implicit bias among physicians and its prediction of thrombolysis decisions for black and white patients. Journal of General Internal Medicine, 22(9), 1231–1238.

Harvard Kennedy School. (2023). Doctors' political beliefs influence COVID-19 treatment recommendations. hks.harvard.edu.

Hoppe, J.M. et al. (2024). ChatGPT with GPT-4 outperforms emergency department physicians in diagnostic accuracy. Journal of Medical Internet Research, 26, e56110. PMC11263899.

Institute of Medicine. (2000). To Err is Human: Building a Safer Health System. National Academies Press.

Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

Kahneman, D. & Tversky, A. (1979). Prospect theory: An analysis of decision under risk. Econometrica, 47(2), 263–291.

Kahan, D.M. (2017). Misconceptions, Misinformation, and the Logic of Identity-Protective Cognition. Yale Law School Cultural Cognition Project Working Paper No. 164.

Milgram, S. (1963). Behavioral study of obedience. Journal of Abnormal and Social Psychology, 67(4), 371–378.

Minson, J.A. et al. (2023). The political polarization of COVID-19 treatments among physicians and laypeople in the United States. PNAS, 120(7), e2216179120.

Institute of Medicine. (2003). Unequal Treatment: Confronting Racial and Ethnic Disparities in Health Care. National Academies Press.

O'Sullivan, E.D. & Schofield, S.J. (2018). Cognitive bias in clinical medicine. Journal of the Royal College of Physicians of Edinburgh, 48(3), 225–232. PMC8520040.

Ross, L. (1977). The intuitive psychologist and his shortcomings. Advances in Experimental Social Psychology, 10, 173–220.

Saposnik, G. et al. (2016). Cognitive biases associated with medical decisions: A systematic review. BMC Medical Informatics and Decision Making, 16(1), 138. PMC5093937.

Singh, H. et al. (2013). Types and origins of diagnostic errors in primary care settings. JAMA Internal Medicine, 173(6), 418–425.

Takita, H. et al. (2025). A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians. npj Digital Medicine. PMC11929846.

Wallace, J., Goldsmith-Pinkham, P. & Schwartz, J.L. (2022). Excess death rates for Republican and Democrat registered voters during the COVID-19 pandemic. NBER Working Paper 30512.